This blog post is Human-Centered Content: Written by humans for humans.

Ask one journalist to research a story, write it, take photos, fact-check and copy-edit and you’ll get work that’s “fine.” Ask a newsroom to do it and you’ll get something worth reading.

The same logic often applies to AI.

Most of us start in lone-ranger mode. We hand one AI a complex task: Research this topic, write about it, check your work, adjust the tone. For simple tasks, that works. A well-crafted prompt produces useful output, and everyone’s happy.

But somewhere around “complex,” it often breaks down. Not because the AI isn’t capable, but because we’re asking one agent (a single AI instance with a single job) to split its focus across too many competing responsibilities at once.

The research isn’t deep enough. The writing is technically correct but flat. The quality feels passable rather than good. We can try to keep iterating on the prompt, but the real issue is the architecture.

The Shift: From User to Manager

The newsroom model is a fundamentally different way of thinking about AI workflows. A newspaper doesn’t have one employee who writes the story, takes the photos, checks the facts, designs the layout and edits the copy. It has a reporter, a photographer, a researcher, a copy editor and a layout designer. Each has a defined role. Each is handed a clear piece of the work. Each optimizes for their specific job without worrying about anyone else’s.

Working with AI, you need a mindset shift. You’re not a user anymore. You’re a managing editor.

This isn’t just a metaphor. It’s how the most effective AI workflows we’ve built actually operate. Narrow instructions often produce better output than broad ones, because each agent can optimize for its specific job without competing priorities pulling it in different directions. The researcher doesn’t have to worry about tone. The writer doesn’t have to worry about fact-checking. The critic doesn’t have to write.

The newsroom figured this out a long time ago. We’re just applying the same logic to AI.

What the Architecture Actually Looks Like

There are many ways to divide the work in a system like this, and the split will look different for each AI implementation. For our example, let’s think through a simple system that creates an article.

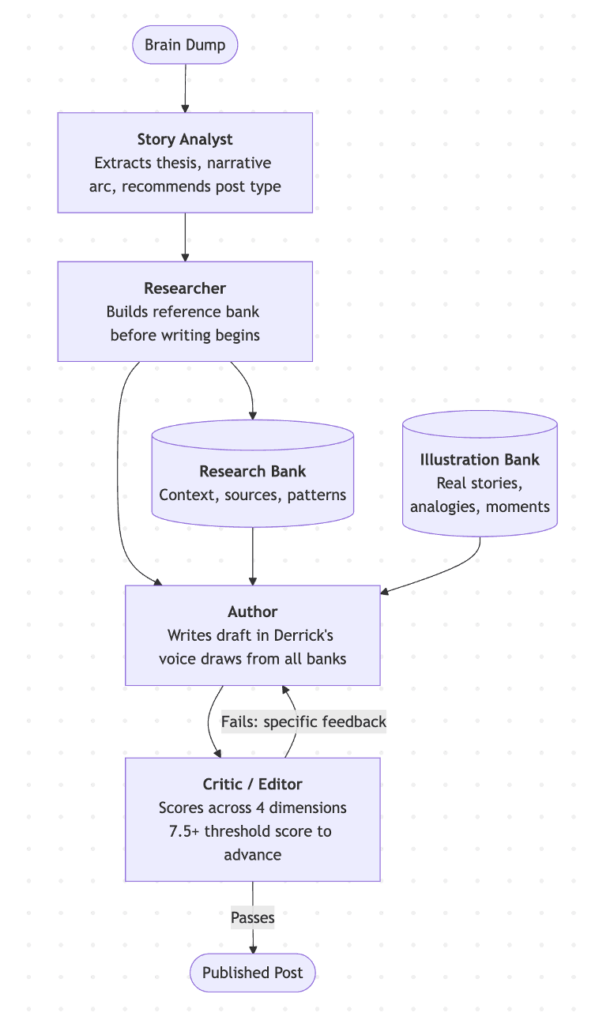

We could have four agents, four jobs, one handoff at a time:

- Story Analyst: Reads the raw input, extracts the core idea, structures the narrative arc, flags gaps

- Researcher: Builds a deep pool of reference material before the writer touches a word

- Author: Writes the draft, drawing from both the story structure and the researcher’s “research bank”

- Critic/Editor: Evaluates the draft, scores it, edits it or sends it back for edits
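To make the handoffs concrete, here is a minimal sketch of the four roles as a pipeline in Python. Everything named here (`Handoff`, `run_newsroom`, `stub_agent`, the prompt wording) is a hypothetical stand-in, and each "agent" is just a function that takes a prompt and returns text. A real setup would wrap a model call instead of the stub.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Assumption: an agent is any function from a prompt string to a response string.
Agent = Callable[[str], str]


@dataclass
class Handoff:
    """The work product that moves from one role to the next."""
    raw_input: str
    outline: str = ""
    research_bank: List[str] = field(default_factory=list)
    draft: str = ""
    review: str = ""


def run_newsroom(raw_input: str, analyst: Agent, researcher: Agent,
                 author: Agent, critic: Agent) -> Handoff:
    """Run the four roles in order, one handoff at a time."""
    work = Handoff(raw_input=raw_input)

    # Story Analyst: core idea, narrative arc, gaps.
    work.outline = analyst(
        "Extract the core idea, structure the arc, and flag gaps.\n\n" + raw_input
    )

    # Researcher: one research note per non-empty line of its response.
    notes = researcher("Build a research bank for this outline.\n\n" + work.outline)
    work.research_bank = [line for line in notes.splitlines() if line.strip()]

    # Author: writes from both the outline and the research bank.
    work.draft = author(
        "Write a draft from the outline and research bank.\n\n"
        "Outline:\n" + work.outline + "\n\nResearch:\n" + "\n".join(work.research_bank)
    )

    # Critic/Editor: evaluates the finished draft.
    work.review = critic("Evaluate and score this draft.\n\n" + work.draft)
    return work


def stub_agent(role: str) -> Agent:
    """Stand-in for a real model call: echoes the role and the instruction line."""
    def respond(prompt: str) -> str:
        return f"[{role}] {prompt.splitlines()[0]}"
    return respond


result = run_newsroom(
    "Rough notes about splitting AI work across roles.",
    analyst=stub_agent("analyst"),
    researcher=stub_agent("researcher"),
    author=stub_agent("author"),
    critic=stub_agent("critic"),
)
print(result.review)
```

Notice that each role only sees the prompt written for it. That is the narrow-instructions idea in miniature: the researcher never hears about tone, and the critic never has to write.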

As you experiment with an implementation like this, a few elements will stand out. It will take some tweaking and trial and error to find the best flow for your setup. Here’s what the flow looks like for this thought experiment:

Here are a few key ideas, though:

The research bank changes everything.

When a single AI is asked to research and write in the same pass, the research ends up thin. The agent is already thinking about the draft before it’s finished gathering. Separating the roles fixes that. Our researcher’s only job is to build a deep pool of material before the writer touches a word: Relevant context, source content, patterns worth weaving in, things a reader would expect to see addressed. By the time the author starts, it’s working from a well-stocked pantry instead of an empty refrigerator. The writing reflects that. If you’re writing in a particular niche, you can also point the researcher at quality sources for that niche. Other blogs, books and websites are all good material for the research agent to consume.

The scoring loop is the underrated piece.

Most conversations about multi-agent AI focus on the role specialization. The quality gate gets less attention, but it might matter most. Our critic agent evaluates each draft across multiple dimensions: Is this the right type of content for the goal, is the quality where it needs to be, is it calibrated for the right audience? Each dimension gets a score. If the draft doesn’t clear the threshold, it goes back to the author with specific, actionable feedback.

Ask your agent to output specific reasons why a particular section isn’t working, with suggested fixes, not simply “rewrite this.”

This is how the system produces consistent quality without a human reviewing every draft. It doesn’t advance until it passes. You’re not hoping it turned out well. You have a structured reason to know it did.
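Here is a rough sketch of that loop in Python, with a few assumptions spelled out. The names (`Review`, `passes`, `revise_until_passing`), the 1-to-10 scale, the threshold of 7 and the cap of three rounds are all illustrative choices of mine, not fixed parts of the approach. The author and critic are plain functions standing in for real agents.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Review:
    scores: Dict[str, int]  # dimension name -> score (assumed 1-10 scale)
    feedback: List[str]     # specific reasons plus suggested fixes


def passes(review: Review, threshold: int) -> bool:
    """A draft clears the gate only if every scored dimension meets the threshold."""
    return bool(review.scores) and all(
        score >= threshold for score in review.scores.values()
    )


def revise_until_passing(
    write: Callable[[str, List[str]], str],
    critique: Callable[[str], Review],
    brief: str,
    threshold: int = 7,   # hypothetical passing score
    max_rounds: int = 3,  # assumption: cap the loop so it cannot run forever
) -> Tuple[str, Review, bool]:
    """Send the draft back to the author with feedback until it passes."""
    feedback: List[str] = []
    draft = write(brief, feedback)
    review = critique(draft)
    rounds = 1
    while not passes(review, threshold) and rounds < max_rounds:
        feedback = review.feedback
        draft = write(brief, feedback)
        review = critique(draft)
        rounds += 1
    return draft, review, passes(review, threshold)


# Stub agents so the sketch runs without a model behind it.
def stub_author(brief: str, feedback: List[str]) -> str:
    return brief if not feedback else brief + " For example, think of a newsroom."


def stub_critic(draft: str) -> Review:
    if "For example" in draft:
        return Review({"content type": 8, "quality": 8, "audience": 9}, [])
    return Review(
        {"content type": 8, "quality": 5, "audience": 9},
        ["The main claim is abstract; add a concrete example."],
    )


draft, review, passed = revise_until_passing(
    stub_author, stub_critic, "Agents work better in teams."
)
print(passed, review.scores)
```

The returned flag matters. If the draft still fails after the last round, the caller can see that and route it to a human instead of quietly shipping it.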

The illustration bank compounds over time.

This one surprised me the most. Alongside the research bank, I like to maintain a separate library of real-life stories and examples, concrete moments and useful analogies. Find a funny news story, a quality blog post or a memorable quote in a book? Write it down! Look for anything remotely worth pulling into future articles when the right moment comes. As interesting examples surface from projects, client conversations or things just worth remembering, put them into the bank. The author agent searches the illustration bank when a concrete example would sharpen a point.

What makes this area powerful isn’t any one entry. It’s the accumulation. Stories you’d forget by the time they’re relevant are preserved and surfaced automatically. Six months from now, that bank is worth significantly more than it is today. It’s institutional memory that actually gets used.
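An illustration bank doesn’t need to be fancy to be useful. As one possible starting point, here is a small Python sketch that stores entries with tags and ranks them by how many tags match the topics the author asks about. The class names, the tagging scheme and the sample entries are all hypothetical. A real bank might live in a notes app, a database or a vector store instead.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Illustration:
    text: str
    tags: FrozenSet[str]


class IllustrationBank:
    """In-memory store of stories and analogies, searchable by tag."""

    def __init__(self) -> None:
        self._entries: List[Illustration] = []

    def add(self, text: str, *tags: str) -> None:
        # Tags are lowercased so searches are case-insensitive.
        self._entries.append(Illustration(text, frozenset(t.lower() for t in tags)))

    def search(self, *topics: str, limit: int = 3) -> List[Illustration]:
        """Return entries sharing at least one tag, most overlapping tags first."""
        wanted = {t.lower() for t in topics}
        matches = [
            (len(entry.tags & wanted), entry)
            for entry in self._entries
            if entry.tags & wanted
        ]
        # sort() is stable, so ties keep the order in which entries were added.
        matches.sort(key=lambda pair: pair[0], reverse=True)
        return [entry for _, entry in matches[:limit]]


bank = IllustrationBank()
bank.add("A newsroom splits one story across a reporter, a photographer "
         "and a copy editor.", "roles", "teamwork")
bank.add("A well-stocked pantry beats an empty refrigerator.",
         "research", "preparation")
bank.add("A managing editor keeps clean handoffs moving.", "teamwork", "quality")

hits = bank.search("Roles", "teamwork")
for hit in hits:
    print(hit.text)
```

In this sketch the author agent would call `search` with the topics of the paragraph it is working on and get back a short list to choose from, which keeps the bank from flooding the draft.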

Why the Mental Model Matters More Than the Tools

The frameworks to build these workflows exist largely because much of the industry has converged on multi-agent designs as an answer to single-agent ceilings. This isn’t experimental territory anymore. It’s where the field has landed.

Want to set up a system like this? You don’t have to do much work at all! Simply explain what you’re going for to Claude Code or a similar agentic framework, and it can lay out the system for you.

The barrier most teams are hitting right now in AI isn’t technical. It’s a mental block. They need a mindset shift.

As long as we think about AI as a freelancer we direct with prompts, we’ll keep hitting the ceiling of what a single agent can produce. The shift from AI-as-tool to AI-as-team is what changes the output. You’re not crafting a better prompt. You’re delegating to a researcher, a writer, a critic, an editor, each of whom has one job and does it well.

A newsroom doesn’t produce great journalism just because it has talented people. It produces great journalism because it also has the right structure: Clear roles, clean handoffs, quality checkpoints and a managing editor who keeps it moving. The talent matters, but so does the system.

That same structure is available to anyone building with AI today. The question worth asking isn’t, “How do I get a better response from this AI?” It’s, “What does this workflow look like if I treat it like a team?”

Most people who ask that question don’t go back to the lone-ranger approach. By leveraging a team of agents instead of a single-threaded workflow, you too can supercharge your AI experience.