This post covers how to automate syncing a Matillion project’s local git repository with its remote counterpart, by fetching from and pushing to the remote using the Python Script component. If you would prefer, I also have a similar post that leverages the Bash Script component.

Requirements

- Your Matillion project must already be configured with remote git integration.

- Your Matillion API user and private git SSH key must be stored appropriately on your Matillion virtual machine.

Key Goal

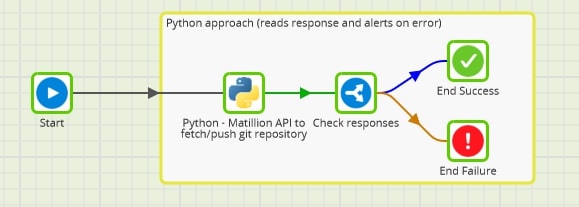

The key goal of our script is to hit the scm/fetch and scm/push endpoints for a given Matillion project, as described in Matillion’s documentation. We can store the success/failure responses in Matillion variables and then use an IF component to report on whether the job was a success or a failure. This additional functionality is not currently possible with the Bash equivalent as the Matillion Bash Script component does not yet support writing to Matillion variables.

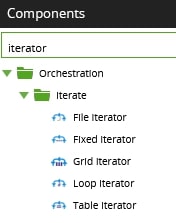

Once this is working, you could use one of Matillion’s iterator components to cycle through multiple projects, and schedule the job to execute daily so that all of your Matillion git repositories are synced automatically.
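As a rough sketch of what that iteration amounts to, the snippet below builds the fetch and push endpoint paths for several group and project pairs. The project names and the `build_scm_endpoints` helper are hypothetical, and in Matillion itself an iterator component would supply the GROUP and PROJECT values rather than a Python loop. It also assumes `safe=''` so that a slash in a name is encoded too, which differs from the default behaviour of `urllib.parse.quote`.

```python
import urllib.parse


def build_scm_endpoints(group, project):
    """Return the (fetch, push) API paths for one Matillion project."""
    # safe='' also encodes '/', which quote() leaves untouched by default
    group_encoded = urllib.parse.quote(group, safe='')
    project_encoded = urllib.parse.quote(project, safe='')
    base = '/rest/v1/group/name/{0}/project/name/{1}/scm'.format(group_encoded, project_encoded)
    return base + '/fetch', base + '/push'


# Hypothetical group/project pairs; an iterator component would supply these
projects = [('Analytics', 'Sales Reporting'), ('Analytics', 'Finance')]

for group, project in projects:
    fetch_endpoint, push_endpoint = build_scm_endpoints(group, project)
    print(fetch_endpoint)
    print(push_endpoint)
```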

Python Script Overview

The script below performs the following steps:

- Import the modules required for this script. All of these modules are native, so nothing needs to be installed beforehand.

- Prep and authentication

  - Retrieve the password for the Matillion API service user from the VM backend.

  - Convert this username and password combination into the base64 encoded string for API authentication.

  - Retrieve the private SSH key for git authentication from the VM backend.

  - URL-encode the name of the project that requires this git sync, along with the group that the project belongs to.

  - Configure the authorization component of the JSON body that will be sent to each of the API endpoints.

  - Configure the headers that will be sent with the API requests.

  - Configure the URLs for the API requests, leveraging the local references as this script will be executed from within a Matillion orchestration job.

- The fetch API request

  - Prepare the fetch options: removeDeletedRefs and thinFetch.

  - Execute the fetch API request.

  - Store the fetch response in a Matillion variable.

- The push API request

  - Prepare the push options: atomic, forcePush and thinPush.

  - Execute the push API request.

  - Store the push response in a Matillion variable.
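To make the authentication and request-body steps concrete, here is a minimal sketch that isolates them as two small functions with no network calls. The helper names `build_basic_auth` and `build_fetch_body` are hypothetical, and the credential and key values are placeholders rather than anything you should hard-code.

```python
import json
from base64 import b64encode


def build_basic_auth(user_name, password):
    """Encode a username and password as an HTTP Basic Authorization value."""
    raw = '{0}:{1}'.format(user_name, password)
    return 'Basic {0}'.format(b64encode(raw.encode('utf-8')).decode('ascii'))


def build_fetch_body(ssh_key, remove_deleted_refs, thin_fetch):
    """Assemble the JSON body for the fetch request."""
    return json.dumps({
        'auth': {'authType': 'SSH', 'privateKey': ssh_key, 'passphrase': ''},
        'fetchOptions': {'removeDeletedRefs': remove_deleted_refs, 'thinFetch': thin_fetch},
    })


# Placeholder values only; real credentials should come from files on the VM
headers = {
    'Authorization': build_basic_auth('api-user', 'example-password'),
    'Content-type': 'application/json',
}
body = build_fetch_body('example-private-key', True, False)
print(body)
```

The push body would follow the same shape, with a `pushOptions` object in place of `fetchOptions`.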

Once the script has executed, we can then read the values of our two response variables and leverage a Matillion IF component to flag whether or not the job was a success.
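The check that the IF component performs can be expressed as a small function. This is only a sketch of the logic: `is_true` and `sync_succeeded` are hypothetical helpers, and the code assumes the values may arrive either as booleans or as text such as 'true', depending on how the Matillion variables are typed.

```python
def is_true(value):
    """Interpret a boolean, or a text value such as 'true' / 'True', as a flag."""
    if isinstance(value, bool):
        return value
    return str(value).strip().lower() == 'true'


def sync_succeeded(fetch_success, push_success):
    """The sync only counts as successful when both steps succeeded."""
    return is_true(fetch_success) and is_true(push_success)


print(sync_succeeded(True, 'true'))
print(sync_succeeded('True', False))
```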

Complete Python Script

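Here, you can find the complete script. Naturally, you may need to update certain file paths and file names to get this working for your own use cases. The uppercase names that the script reads (GROUP, PROJECT and the FETCH_OPTION_ and PUSH_OPTION_ values) are expected to exist as Matillion variables on the job, as are the FETCH_SUCCESS and PUSH_SUCCESS variables that it writes to: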

# Python3 - Automated Matillion Git Sync

## Description

# This is a Python3 script to read Matillion api-user credentials and git SSH private key

# from files in the linux backend and pass them into the Matillion API endpoints

# that fetch from and push to the remote repository

## Modules

import urllib.parse

import os

import http.client

from base64 import b64encode

import json

## Prep and authentication

### Retrieve password for the Matillion user called api-user, and prepare it for API authentication

api_user_name = 'api-user'

with open(os.path.expanduser('/matillion_service_account_users/{0}.txt'.format(api_user_name))) as password_file:
    api_user_password = password_file.read().rstrip('\n')

api_auth_raw = '{0}:{1}'.format(api_user_name, api_user_password)

api_auth_encoded = b64encode(bytes(api_auth_raw, 'utf-8'))

api_auth_decoded = api_auth_encoded.decode('ascii')

api_auth = 'Basic {0}'.format(api_auth_decoded)

### Retrieve SSH private key

with open(os.path.expanduser('/ssh_keys/matillion/id_rsa_gitlab')) as ssh_key_file:
    ssh_key = ssh_key_file.read()

### URL encode group and project

group_encoded = urllib.parse.quote(GROUP)

project_encoded = urllib.parse.quote(PROJECT)

### Configure API auth

api_body_auth = {

"authType": "SSH",

"privateKey": ssh_key,

"passphrase": ""

}

### Configure API headers

api_headers = {

"Authorization": api_auth,

"Content-type": "application/json"

}

### Configure the URLs for the API requests

instance_address = '127.0.0.1'

instance_port = '8080'

endpoint_path = 'group/name/{0}/project/name/{1}'.format(group_encoded, project_encoded)

fetch_endpoint = '/rest/v1/{0}/scm/{1}'.format(endpoint_path, 'fetch')

push_endpoint = '/rest/v1/{0}/scm/{1}'.format(endpoint_path, 'push')

## Fetch

### Prepare fetch options

fetch_options = {

"removeDeletedRefs": FETCH_OPTION_REMOVE_DELETED_REFS,

"thinFetch": FETCH_OPTION_THIN_FETCH

}

### Prepare body of the fetch API request

fetch_body = {

"auth": api_body_auth,

"fetchOptions": fetch_options

}

### Execute fetch API request

print('\n-------------FETCH START-------------\n')

fetch_conn = http.client.HTTPConnection(instance_address, instance_port)

fetch_conn.request("POST", fetch_endpoint, json.dumps(fetch_body), api_headers)

fetch_res = fetch_conn.getresponse()

fetch_data = fetch_res.read()

fetch_response_json = json.loads(fetch_data.decode("utf-8"))

print(fetch_response_json)

### Store fetch response in a Matillion variable

# Default to False if the response has no 'success' key, so the variable is always set
fetch_success_text = fetch_response_json.get('success', False)

context.updateVariable('FETCH_SUCCESS', fetch_success_text)

print('\n--------------FETCH END--------------\n')

## Push

### Prepare push options

push_options = {

"atomic": PUSH_OPTION_ATOMIC,

"forcePush": PUSH_OPTION_FORCE_PUSH,

"thinPush": PUSH_OPTION_THIN_PUSH

}

### Prepare body of the push API request

push_body = {

"auth": api_body_auth,

"pushOptions": push_options

}

### Execute push API request

print('\n--------------PUSH START-------------\n')

push_conn = http.client.HTTPConnection(instance_address, instance_port)

push_conn.request("POST", push_endpoint, json.dumps(push_body), api_headers)

push_res = push_conn.getresponse()

push_data = push_res.read()

push_response_json = json.loads(push_data.decode("utf-8"))

print(push_response_json)

### Store push response in a Matillion variable

# Default to False if the response has no 'success' key, so the variable is always set
push_success_text = push_response_json.get('success', False)

context.updateVariable('PUSH_SUCCESS', push_success_text)

print('\n---------------PUSH END--------------\n')
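The script relies on every response being JSON that reports a `success` value. A more defensive variant might treat a non-2xx status or an unparseable body as a failure, so that the Matillion variable is still set and the IF component can report on it. The sketch below is one way to do that; `parse_scm_response` is a hypothetical helper, and the sample payloads are illustrative rather than captured API output.

```python
import json


def parse_scm_response(status, raw_body):
    """Return True only for a 2xx response whose JSON body reports success."""
    if not 200 <= status < 300:
        return False
    try:
        payload = json.loads(raw_body.decode('utf-8'))
    except (UnicodeDecodeError, ValueError):
        # Body was not valid UTF-8 JSON (for example an HTML error page)
        return False
    if not isinstance(payload, dict):
        return False
    return payload.get('success') is True


# Illustrative payloads, not captured from a real Matillion instance
print(parse_scm_response(200, b'{"success": true}'))
print(parse_scm_response(401, b'Unauthorized'))
print(parse_scm_response(200, b'<html>error</html>'))
```

In the script, this could be called as `parse_scm_response(fetch_res.status, fetch_data)` and the result passed to `context.updateVariable`.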