When using Matillion to load data into Snowflake, temporary stages are often created in Snowflake to store the data before it lands in the destination table. Often, this is not an issue as Matillion provides a handy load option called “Clean Staged Files,” which cleans up these stages. For example, this is a possible load option when using the Database Query component.

However, there may be occasions where the “Clean Staged Files” load option has been disabled and you have a large number of files building up in your Snowflake environment. Alternatively, you may find that you end up with a lot of empty stages that do not contain any files but still exist and lead to confusing bloat when administrating your Snowflake environment. In this brief guide, we will walk through how to remove these stages.

Prerequisites

- ACCOUNTADMIN privileges to your Snowflake environment, or equivalent privileges to query the SNOWFLAKE.ACCOUNT_USAGE.STAGES table in the shared SNOWFLAKE database for your account

- MODIFY privileges on stages created by Matillion; most easily achieved by having access to the role that Matillion leverages or one of its parent roles

Dynamically Creating DROP Statements

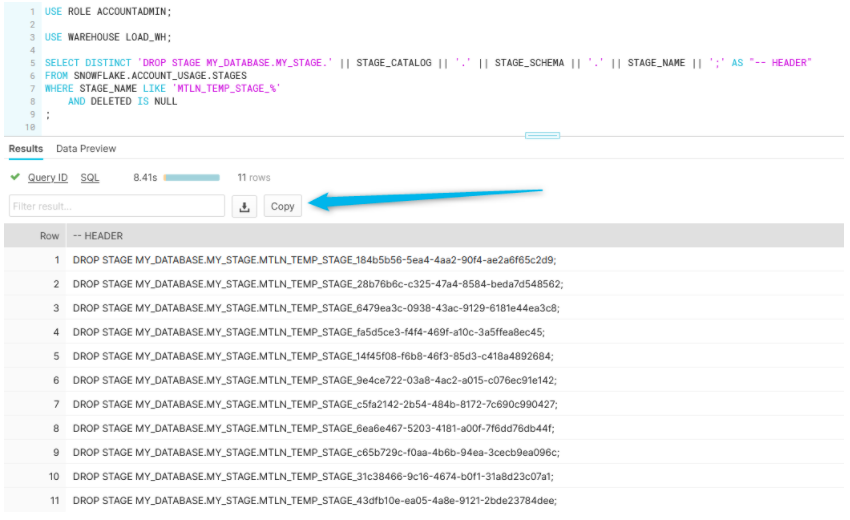

The following SQL code can be executed in Snowflake to query the SNOWFLAKE.ACCOUNT_USAGE.STAGES table and generates a series of statements to DROP each temporary stage:

SELECT DISTINCT 'DROP STAGE ' || STAGE_CATALOG || '.' || STAGE_SCHEMA || '.' || STAGE_NAME || ';' AS "-- HEADER"

FROM SNOWFLAKE.ACCOUNT_USAGE.STAGES

WHERE STAGE_NAME LIKE 'MTLN_TEMP_STAGE_%'

AND DELETED IS NULL

;

You may wish to add a few more conditions to the WHERE clause depending on your use case. This statement will yield a result similar to the following screenshot:

See that Copy button the big blue arrow is pointing to? This will allow you to quickly copy the output of your query. You can then paste it into a new worksheet and execute it. This will quickly and cleanly delete all of those temporary Matillion stages. We’ve even commented out the header record already.

And so concludes this short and sweet guide. I hope you find it useful!