One of my least favorite phrases currently used in the analytics world is “data science.” This is ironic, since “data science” is in my official InterWorks job title. Yet every time I hear the phrase, I get a little knot in my stomach, like the one I feel on a turbulent flight.

Five years ago, data science was the hottest term in the industry, thanks to the “Harvard Business Review” naming data scientist the “sexiest job of the 21st century.” A lot of great things have happened since then. Companies started to invest in skill sets like statistics and machine learning. Academic institutions continued to create cross-disciplinary majors and programs to fit the changing needs of business, and I am one of their beneficiaries.

The Sexiest Job in Data

A whole class of otherwise overlooked statisticians and computer science professionals was suddenly in high demand. But as with many over-hyped things, cracks began to emerge as the rush for data science skills continued, and those cracks have led us to where we are today. Businesses are now re-evaluating their investments after seeing little return on investment (ROI), in large part because data science was over-hyped.

Don’t get me wrong: a lot of companies are doing really cool things with their data science teams, and here at InterWorks, we’ve been lucky enough to help with some of those endeavors. But there is a lot more noise now. Seemingly anyone with a pulse and a statistics class under their belt calls themselves a data scientist.

In an effort to ride the hype, companies constantly rebrand what used to be called “reporting” as data science. All of this has made it much harder to tell which analytics work actually delivers value. However, hype is not the only reason companies are questioning their investments in data science. The problems compound across every role involved, data scientists included.

Why Do Data Science Projects Fail?

I’ve narrowed down five main reasons why data science projects fail:

Management

Data science is not report development. If you manage a data science project with the same techniques you use for report development, it is likely to end in failure. By its very nature, data science work does not guarantee valuable results. Each data science project therefore requires its own framework and understanding to be managed properly.

Along the same lines, data science outcomes have to be measured differently. A single line of analysis won’t always demonstrate predictive power, and that is not a failure. Data scientists cannot guarantee that any given model will be predictive, but learning that a model is not predictive still provides valuable insight. This is where traditional development value systems fail data science.

Data

There are three ways your data can be the problem:

- Data Quality: You have probably heard the saying “garbage in, garbage out.” Nowhere is it truer than in data science today.

- Data Availability: Data science projects almost always turn into lengthy data engineering projects, because the data was never prepared or delivered for analysis in the first place. Just because data exists does not mean it is available for analysis.

- Data Speed: You might have all your data in one place, but your data science team may still be working on individual laptops with only 16GB of RAM. If your data environment is not performant, neither is your data science.
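To make “garbage in, garbage out” a bit more concrete, here is a minimal sketch of a first-pass data quality check worth running before any modeling starts. It assumes records arrive as a list of Python dictionaries; the function name `profile_records` and the sample fields are hypothetical and only for illustration, not an InterWorks tool or a standard API.

```python
from collections import Counter
from typing import Any


def profile_records(records: list[dict[str, Any]]) -> dict[str, Any]:
    """Hypothetical helper: row count, missing values per field, duplicate rows."""
    # Collect every field name that appears in any record.
    fields = sorted({key for row in records for key in row})

    # Treat absent keys, None, and blank strings as missing values.
    missing = Counter()
    for row in records:
        for field in fields:
            value = row.get(field)
            if value is None or (isinstance(value, str) and not value.strip()):
                missing[field] += 1

    # Count rows whose contents exactly repeat an earlier row.
    seen = Counter(tuple(sorted((k, repr(v)) for k, v in row.items())) for row in records)
    duplicates = sum(count - 1 for count in seen.values())

    return {
        "rows": len(records),
        "missing": {field: missing[field] for field in fields},
        "duplicate_rows": duplicates,
    }


# Made-up sample records, for illustration only.
sample = [
    {"customer_id": 1, "region": "West", "revenue": 120.0},
    {"customer_id": 2, "region": "", "revenue": None},
    {"customer_id": 1, "region": "West", "revenue": 120.0},
]
print(profile_records(sample))
# {'rows': 3, 'missing': {'customer_id': 0, 'region': 1, 'revenue': 1}, 'duplicate_rows': 1}
```

Even a check this simple surfaces the kind of gaps and duplicates that would quietly distort a model trained on the same data.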

Tools

Data science requires the right tools for the job. Unfortunately, the available tools for statistical analysis and machine learning are always changing. Far too often, data scientists are stuck with outdated tools and environments because they don’t know how to use anything else, and that directly limits the type of work they can do. Embracing modern, flexible infrastructure and solid platforms for experimentation is key to enabling your data scientists’ success.

Attitudes

Even in the age of “Moneyball,” many leaders simply want to rely on instinct or outdated reports to make decisions. Data can also make it very easy to build narratives that fit preconceived ideas, and as analysts, we often bring those ideas to the table too soon. Furthermore, it can be hard to go from trusting yourself or others to trusting a faceless algorithm. Any organization has a lot of work to do to build that understanding and deliver value consistently.

We (Data Scientists) Are to Blame

Whose fault is it if an audience does not understand the plot of a play? The performers, writers and producers bear that responsibility, not the audience. The same holds in the world of data: as data scientists, we can only blame ourselves if our business users and leaders do not understand the value of what we do.

Like a younger version of myself at a buffet, we also get overzealous and try to eat all the food at once. We want ideal solutions to every business problem at the same time, and instead we create projects that never reach a satisfying result. We should focus on the simple solution that solves 80% of the problem in a fraction of the time, rather than tackling the entire project with a complex solution over a long period.
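One practical way to act on the 80% idea is to measure a naive baseline before investing in anything complex, and to require any new model to beat it by a meaningful margin. The sketch below is illustrative only: the weekly sales numbers are made up, the mean and last-value forecasts are common textbook baselines rather than a prescribed method, and the 5% `min_gain` threshold is an arbitrary assumption.

```python
from statistics import mean


def mean_absolute_error(actual: list[float], predicted: list[float]) -> float:
    """Average absolute difference between actual and predicted values."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("actual and predicted must be non-empty and the same length")
    return mean(abs(a - p) for a, p in zip(actual, predicted))


def mean_baseline(train: list[float], horizon: int) -> list[float]:
    """Naive forecast: predict the training-set average for every future period."""
    return [mean(train)] * horizon


def last_value_baseline(train: list[float], horizon: int) -> list[float]:
    """Simple forecast: repeat the most recent observed value."""
    return [train[-1]] * horizon


def beats_baseline(candidate_error: float, baseline_error: float, min_gain: float = 0.05) -> bool:
    """True only if the candidate cuts error by at least `min_gain` (a fraction) vs. the baseline."""
    return candidate_error <= baseline_error * (1 - min_gain)


# Made-up weekly sales figures: fit on the first six weeks, evaluate on the last two.
weekly_sales = [100, 102, 98, 105, 110, 108, 112, 115]
train, test = weekly_sales[:6], weekly_sales[6:]

naive_error = mean_absolute_error(test, mean_baseline(train, len(test)))
simple_error = mean_absolute_error(test, last_value_baseline(train, len(test)))

print(f"mean baseline MAE: {naive_error:.2f}")    # mean baseline MAE: 9.67
print(f"last-value MAE: {simple_error:.2f}")      # last-value MAE: 5.50
print(f"beats baseline: {beats_baseline(simple_error, naive_error)}")  # beats baseline: True
```

If a months-long modeling effort cannot clearly outperform something this simple, that is worth knowing early, and it is itself a useful result.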

Analysis for the sake of analysis might as well be paralysis. Data science is supposed to start and end with the business. This requires data science teams to work and consult with business leaders constantly, to make sure their outputs are solving real problems and not made-up ones.

Even if we are tackling the right business problems, the solution must deliver value. As the original Netflix Prize showed, it’s common to create a winning solution that the business can never use because of its complexity, its performance characteristics or changing market conditions.

Above: The 2009 winners of the Netflix Prize.

Increase Your Return on Investments

If you’re not seeing ROI, I have three suggestions. First, everyone who has bought into the data science hype must be realistic about what is possible in their organization. Business leaders are not always aware of the true situation on the ground. They may think their data is ripe for insights and predictive models when, in reality, it is not even good enough to trust the numbers in their weekly reports.

If the data foundation is weak, it is time to reinforce it or build a new one. Start with a firm evaluation of your data and data infrastructure. This will help you understand where you stand today and what investments you need to ensure future productivity.

Second, many companies do not have the in-house skills needed to complete successful data science projects. Even if you have the budget to hire for those skills, you may not have the experience to properly vet applicants. Many internal analysts also want the “sexiest job,” but data science has a steep learning curve, so proceed with caution. Consult with experts and set up realistic trial projects for new recruits or employees.

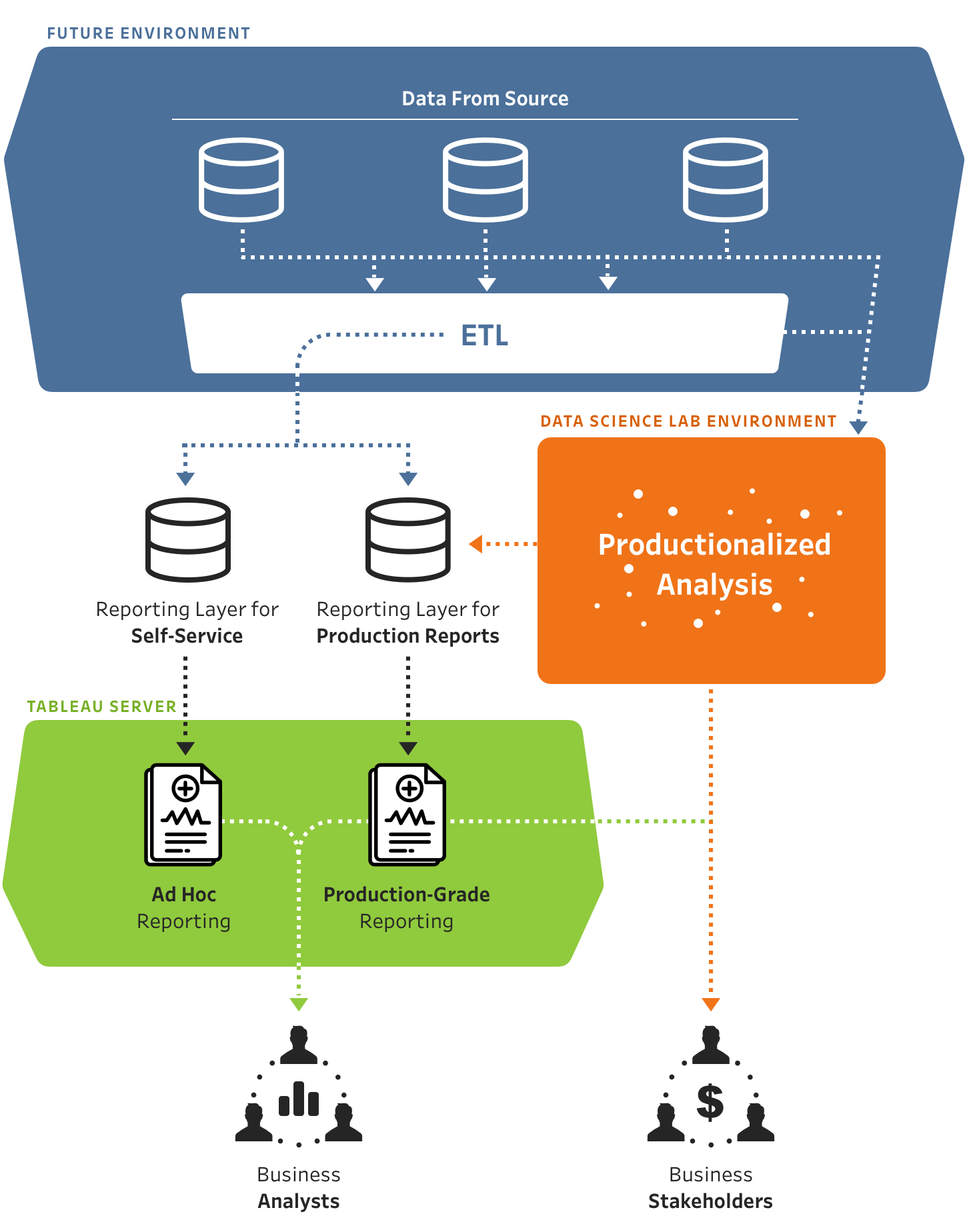

Above: An ideal data science flowchart.

Third, assuming your organization has the skills, it is likely time for new rules of engagement. Tighter communication between stakeholders and data scientists is a must. Too often, I see business leaders explaining to data scientists what is possible with data instead of the other way around.

It’s likely time for someone from your internal data science team to take a more active role in the business to create stronger partnerships. Data science cannot be treated like a traditional IT function, or worse, a silo on its own. It must involve much more collaboration. Invest in your organization by setting up the proper business processes and infrastructure to enable tighter collaboration.

These suggestions are not all-encompassing, and we’ll dig deeper into them in the future, but this is a start. If you’re ready to start diving into your own data, send us a note and we’d love to figure out the next steps together.