I have an ongoing reel playing in my mind about the future of business intelligence that I’d like to share with you all. It’s a dream about the future that I’ve been having since I first started using Tableau in 2007. The dream looks like this:

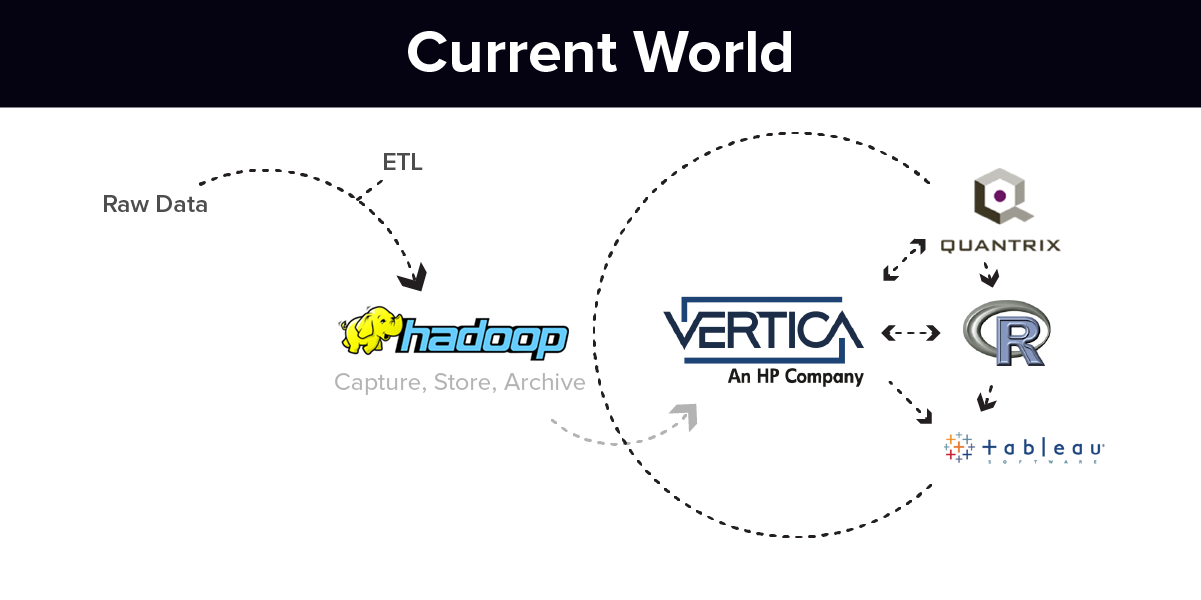

The vision is an ecosystem of tools that allow for self-building, easily accessible and highly visual data. Initially, the components of the tool set might look like this:

- A data capture and storage system (Hadoop)

- A data visualization system (Tableau)

- A data modeling system (Quantrix Modeler)

- A fast ETL tool (Syncsort)

- A fast columnar analytic database (Vertica)

Excel and Access would still be part of the set. Other tools like Alteryx (more complicated blending) or Metric Insights’ Push Intelligence for Tableau (push dashboards) could be added for those who need those capabilities.

The idea is that even small entities will be generating a lot of data, and public data sources will be more commonly blended with internal (proprietary) data sources than before.

This model works right now. Data can be ingested into Hadoop without first building a schema. Once a domain of information is desired, a schema can be created in Vertica to provide a highly performant structure for analysis.
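To make the "ingest first, build schema later" idea concrete, here is a minimal Python sketch. It assumes the raw data is stored as JSON lines, and the sample records, the `infer_columns` and `create_table_sql` helpers, and the generic SQL type names are all hypothetical illustrations, not Hadoop or Vertica APIs. A real deployment would use the target database's own types and loading tools.

```python
import json

# Hypothetical raw events, stored as-is with no schema declared up front.
RAW_LINES = [
    '{"order_id": 1, "region": "West", "amount": 19.5}',
    '{"order_id": 2, "region": "East", "amount": 7, "coupon": "SPRING"}',
    '{"order_id": 3, "region": "West", "amount": 12.25}',
]

# Generic SQL type names (an assumption); a real database may differ.
SQL_TYPES = {int: "INTEGER", float: "FLOAT", str: "VARCHAR(255)"}


def infer_columns(lines, wanted):
    """Scan raw records and infer a SQL type for each wanted field."""
    seen = {name: set() for name in wanted}
    for line in lines:
        record = json.loads(line)
        for name in wanted:
            if name in record and record[name] is not None:
                seen[name].add(type(record[name]))
    columns = {}
    for name in wanted:
        types = seen[name]
        if types == {int}:
            columns[name] = SQL_TYPES[int]
        elif types and types <= {int, float}:
            # Mixed integers and decimals are widened to a float column.
            columns[name] = SQL_TYPES[float]
        else:
            # Strings, mixed types, or fields never seen fall back to text.
            columns[name] = SQL_TYPES[str]
    return columns


def create_table_sql(table, columns):
    """Render a CREATE TABLE statement for the chosen domain."""
    body = ",\n".join(f"    {name} {sql_type}" for name, sql_type in columns.items())
    return f"CREATE TABLE {table} (\n{body}\n);"


# Only now, when a domain is wanted for analysis, is a schema produced.
columns = infer_columns(RAW_LINES, ["order_id", "region", "amount"])
print(create_table_sql("orders", columns))
```

Note that the `coupon` field stays in the raw store untouched. It only gets a column if someone later decides it belongs in the analysis.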

Forecasting models can be made in a modern spreadsheet. Using very granular data from Vertica, you can model these future scenarios in Quantrix (a spreadsheet that builds formulas based on dimensions and measures, not cell-based formula logic).

If very rapid loading is required, Syncsort can be added to speed the loads to Vertica, Hadoop or both. Hadoop can also be used to archive historical data that is not used frequently. Tableau is then the visualization layer for the entire system. That kind of setup works today and is relatively economical.

The Future Will Be Easier

While this collection of tools works today, it’s still too costly and too technically demanding for most smaller entities to deploy. Only those small entities with the technical chops, large data volumes and a business imperative will deploy it.

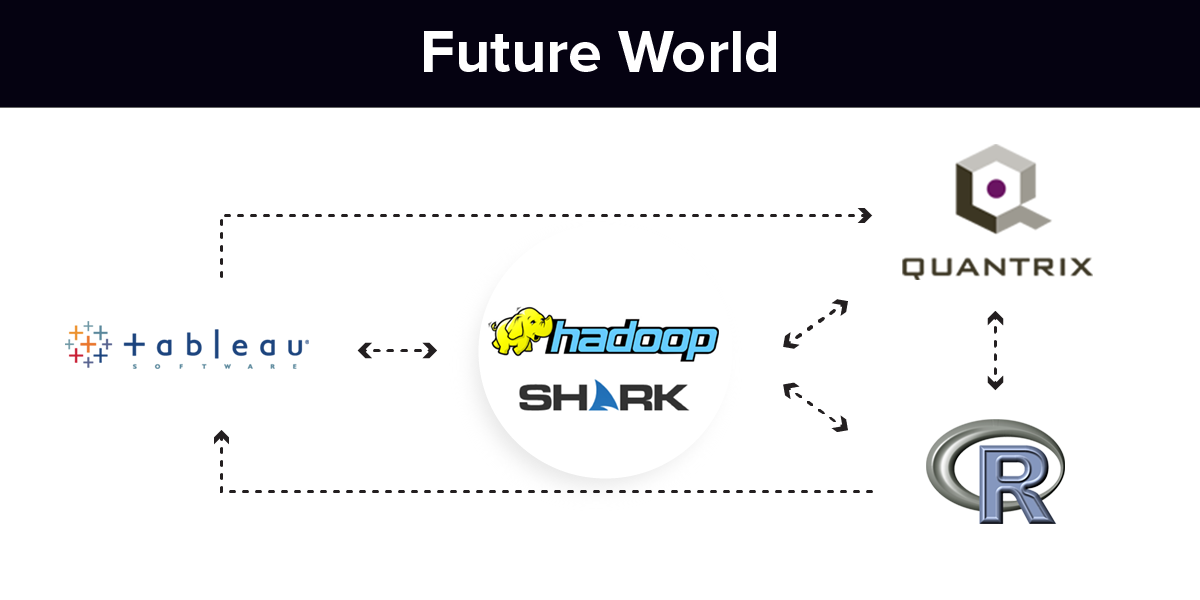

What would happen if Hadoop could self-generate performant schema? This could eliminate the need for the column store, or it could automatically render schema in the column store. The vision is this:

- Capture all of your data in Hadoop

- Decide what you want to analyze by making selections

- Hadoop self-generates performant schema (wherever)

- Tableau is used for analysis

Hadoop is still riding the crest of the hype cycle, but the Hadoop ecosystem shows a lot of promise. Hadoop running in conjunction with Hive/Spark/Shark, Impala or any of the other new inventions that make Hadoop a faster source for analysis is becoming a viable option. The Hadoop ecosystem will only get better over the next five years.

The world will change when non-technical users can select the dimensions and measures that they wish to analyze and the BI ecosystem can self-generate performant schema with good data.
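As a rough illustration of what "self-generating" from a user's selections could mean, here is a small Python sketch that turns chosen dimensions and measures into a pre-aggregated summary table. The `build_summary_sql` function, the table and column names, and the generic `CREATE TABLE ... AS SELECT` form are assumptions made for illustration. No existing tool's API is implied, and a real system would also need to validate and quote identifiers.

```python
def build_summary_sql(source, dimensions, measures):
    """Build SQL that pre-aggregates a user's selection.

    dimensions: list of column names to group by.
    measures: dict mapping a column name to an aggregate function name.
    """
    if not dimensions or not measures:
        raise ValueError("select at least one dimension and one measure")

    allowed = {"SUM", "AVG", "MIN", "MAX", "COUNT"}
    select_parts = list(dimensions)
    for column, func in measures.items():
        func = func.upper()
        if func not in allowed:
            raise ValueError(f"unsupported aggregate: {func}")
        select_parts.append(f"{func}({column}) AS {func.lower()}_{column}")

    # Name the summary table after the source and the chosen dimensions.
    name = f"{source}_by_{'_'.join(dimensions)}"
    return (
        f"CREATE TABLE {name} AS\n"
        f"SELECT {', '.join(select_parts)}\n"
        f"FROM {source}\n"
        f"GROUP BY {', '.join(dimensions)};"
    )


# A non-technical user picks "region" and "month" and asks for total amount.
print(build_summary_sql("orders", ["region", "month"], {"amount": "sum"}))
```

The user never writes SQL here. The selections are the only input, and the structure for analysis is derived from them.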

The work for technical folks will be to do everything possible to secure proprietary information, manage the store, provide the necessary cloud access or hardware and ensure data quality, all very quickly.

It’s an exciting time to be in data. All of this will happen. I may not have all of the pieces nailed, but I’m certain we are going to get there in this decade. What happens when a one-person company can collect, analyze, visualize and understand massive data, probably stored in the cloud?

Business models will be re-written. The world will change.